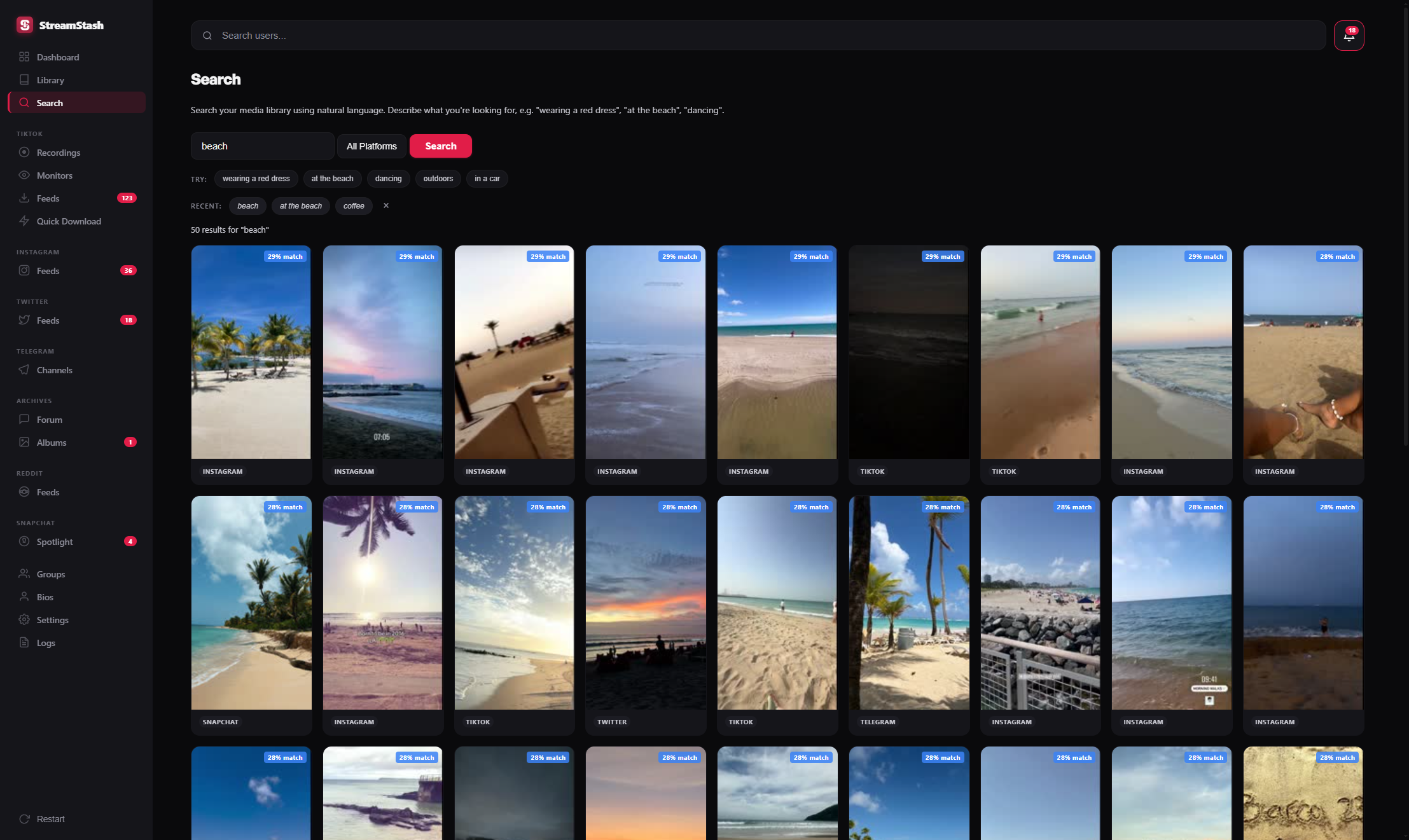

Type a phrase. Get ranked thumbnails in well under a second. The model lives on your machine, the index lives in your SQLite database, and your archive never leaves the building.

Try red dress at the beach · skateboarding at night

Three numbers do most of the explaining. They come from a real install on a mid-range desktop, not a marketing benchmark.

Vector lookup against an indexed library of a few thousand clips. Stays sub-second well past 100,000.

First-time cost on an RTX 3060. New saves are embedded in the background as they arrive.

No keys, no usage tier, no cloud. The model file sits on disk and the embeddings sit next to your library.
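To make "embedded in the background" and "the embeddings sit next to your library" concrete, here is a minimal sketch using only the Python standard library. It is not StreamStash's actual code: the table name `clip_embeddings`, the helper names, and the `fake_embed` stand-in for the CLIP model are all assumptions for illustration.

```python
import queue
import sqlite3
import threading
from array import array
from contextlib import closing


def fake_embed(clip_path):
    """Hypothetical stand-in for the local CLIP model; returns a tiny vector."""
    total = sum(clip_path.encode("utf-8"))
    return [float(total % 7), float(total % 11), float(total % 13)]


def init_db(db_path):
    """Create the (assumed) embeddings table if it does not exist yet."""
    with closing(sqlite3.connect(db_path)) as conn:
        conn.execute(
            "CREATE TABLE IF NOT EXISTS clip_embeddings ("
            "clip_path TEXT PRIMARY KEY, vec BLOB NOT NULL)"
        )
        conn.commit()


def embed_worker(db_path, pending):
    """Embed queued clip paths one by one until a None sentinel arrives."""
    with closing(sqlite3.connect(db_path)) as conn:
        while True:
            clip_path = pending.get()
            if clip_path is None:
                break
            # Store the vector as packed 32-bit floats in a BLOB column.
            blob = array("f", fake_embed(clip_path)).tobytes()
            conn.execute(
                "INSERT OR REPLACE INTO clip_embeddings (clip_path, vec) "
                "VALUES (?, ?)",
                (clip_path, blob),
            )
            conn.commit()


def load_embedding(db_path, clip_path):
    """Read one stored vector back as a list of floats, or None if missing."""
    with closing(sqlite3.connect(db_path)) as conn:
        row = conn.execute(
            "SELECT vec FROM clip_embeddings WHERE clip_path = ?",
            (clip_path,),
        ).fetchone()
    if row is None:
        return None
    vec = array("f")
    vec.frombytes(row[0])
    return list(vec)


if __name__ == "__main__":
    import os
    import tempfile

    db_path = os.path.join(tempfile.mkdtemp(), "library.db")
    init_db(db_path)
    pending = queue.Queue()
    worker = threading.Thread(target=embed_worker, args=(db_path, pending))
    worker.start()
    pending.put("IG_reel_C8wPx-7tNUk.mp4")  # a new save arrives
    pending.put(None)                        # tell the worker to stop
    worker.join()
    print(load_embedding(db_path, "IG_reel_C8wPx-7tNUk.mp4"))
```

The queue lets new saves return immediately while the worker catches up, and everything ends up in a single SQLite file on disk.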

When a clip lands in your library, it's passed through a local CLIP model. The model returns a vector — a numerical fingerprint of what's visible in the frame.

Type a phrase. CLIP encodes the text into the same vector space as your frames, so "person in red at the beach" lands near the frames that show that scene.
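"Lands near" can be read as a similarity measure between two vectors. The sketch below uses cosine similarity, a common choice for CLIP-style embeddings, though the page does not say which measure StreamStash uses. The three-number vectors are invented toy values, far smaller than real embeddings, and are not output from any model.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # a zero vector has no direction to compare
    return dot / (norm_a * norm_b)


# Toy vectors (assumed values for illustration only).
query_vec = [0.9, 0.8, 0.1]    # stands in for the encoded phrase
beach_frame = [0.8, 0.9, 0.0]  # stands in for a frame showing the beach scene
skate_frame = [0.1, 0.0, 0.9]  # stands in for an unrelated frame

if __name__ == "__main__":
    print("beach:", round(cosine_similarity(query_vec, beach_frame), 3))
    print("skate:", round(cosine_similarity(query_vec, skate_frame), 3))
```

In this toy setup the beach frame scores much higher than the skate frame, which is the behaviour the shared vector space is meant to give.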

The closest matches surface as ranked thumbnails with a confidence score. Click one to play the underlying file from your library.
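Ranking the closest matches can be sketched as a top-k selection over similarity scores. This is an illustration under assumptions, not the product's implementation: the function names are hypothetical, the vectors are toy values, and treating the raw similarity as the displayed confidence score is a guess.

```python
import heapq
import math


def normalize(vec):
    """Scale a vector to unit length so a dot product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm else list(vec)


def top_matches(query_vec, frame_index, k=3):
    """Return the k best (clip_path, score) pairs, highest score first.

    frame_index maps clip_path -> unit-length vector (assumed layout).
    """
    scored = (
        (sum(q * f for q, f in zip(query_vec, vec)), path)
        for path, vec in frame_index.items()
    )
    return [(path, score) for score, path in heapq.nlargest(k, scored)]


if __name__ == "__main__":
    # Toy index with invented vectors and file names.
    index = {
        "beach.mp4": normalize([0.8, 0.9, 0.0]),
        "skate.mp4": normalize([0.1, 0.0, 0.9]),
        "city.mp4": normalize([0.5, 0.1, 0.5]),
    }
    for path, score in top_matches(normalize([0.9, 0.8, 0.1]), index, k=2):
        print(f"{path}  {score:.3f}")
```

A linear scan like this compares the query against every stored vector. Whether StreamStash does that or uses an approximate index is not stated on this page.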

Most archives are searchable in name only. AI search reads the pixels — which is the part you remember.

You know there's a clip somewhere with someone in a red dress on a beach. The filename has none of that.

VID_20240715_142318.mp4 · tiktok_@creator_7350421987651.mp4 · IG_reel_C8wPx-7tNUk.mp4

No match. You scroll for fifteen minutes, give up, and re-save the post from the original platform.

The query goes through CLIP locally and lands next to the frames that actually look like the scene.

red dress at the beach

Same layout, same scoring, same local-first execution. This is the page in StreamStash, not a stylised mock.

Power adds AI search, all eight platforms, unlimited monitored feeds, unlimited live monitors, and cross-platform deduplication. One-time purchase, all updates included, runs entirely on your machine.

From £40 · one-time, lifetime updates

Power unlocks AI search and the full archiving stack. One-time purchase, runs on your machine, every update included.

See Power pricing